Next Gen Compute: What's Going to Power the AI Scaleup?

The AI infrastructure race has moved from silicon to energy, from chips to racks, and from the US to everywhere. And now it’s running into a wall. So what’s going to power the next five years of the AI scaleup?

Written by: George Patin | Research Contributor, London Venture Capital Network

The AI infrastructure buildout has been all over the headlines for over a year now. Endless data centres, Blackwell GPU orders in the tens of thousands, and seemingly never enough compute. New facilities cannot be spun up fast enough. With Nvidia booked through 2026 and data centre energy use creeping up towards 10% of all energy supply, compute is becoming a major roadblock to the AI scaleup.

And then there is the physics. Top-of-the-line TSMC chips are likely less than five years out from breaching the 2nm limit. Going below 1nm is not an option, which means a paradigm shift is on the way. Will it be specialised inference compute, systemic efficiency, radically new technologies, or a mix of all three?

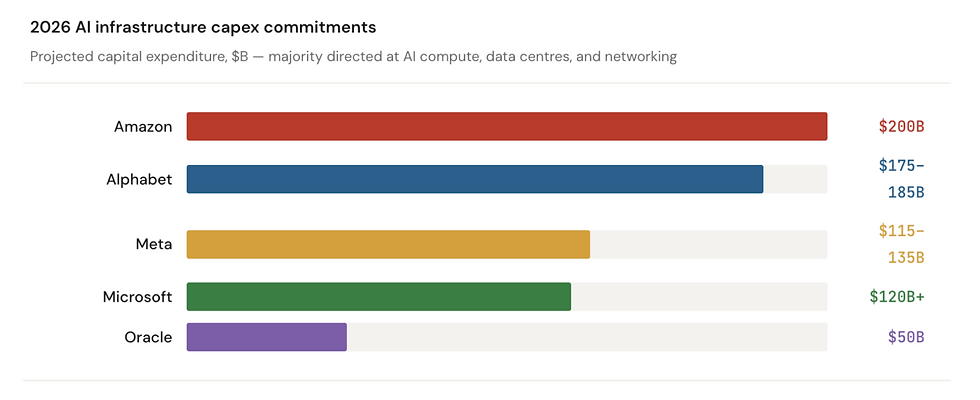

The Scale

The numbers tell the story. Global AI spending is projected to exceed $2.5 trillion in 2026, with more than half flowing directly into foundational infrastructure: servers, accelerators, and data centre platforms. The hyperscaler commitments individually dwarf the GDP of some mid-sized countries.

Figure 1, Sources: TechCrunch, Futurum Group, Earnings Data (2026 Q1)

By mid-2026, more than eighty large-scale AI projects will be under construction simultaneously, with all major players deploying cash in the tens of billions. One thing is clear: even the entire tech sector combined cannot scale up fast enough to meet demand. The question for investors, then, is not whether AI compute demand will grow, but which layers of the stack will capture value as the architecture evolves.

From Training to Inference

Part of the shift happening under the proverbial server canopies is a transition from training to inference. Circa 2023, inference accounted for about a third of AI compute; the majority was devoted to training models like GPT-4 and Llama 3 at unprecedented scale. That has changed.

Figure 2, Sources: TMT Predictions 2026, Deloitte Insights

Inference now accounts for close to two-thirds of all deployed compute. The inference market alone crossed $100bn in value last year, as real-time AI products and agentic workflows scale up.

This matters because training and inference are fundamentally different challenges. Training is the process that builds the models themselves: it works in batches and is the more compute- and memory-intensive of the two due to the sheer scale of the model weights. Inference, on the other hand, is the process used for deployment, and it is far more sensitive to latency and cost-per-watt metrics.

This difference means chip specialisation. It also means different tech will win in the next wave.

The Hardware Landscape: Who Builds What?

NVIDIA remains the centre of gravity. The Vera Rubin platform is built on TSMC 3nm with 336 billion transistors and delivers 5x Blackwell's inference performance, with a claimed 10x reduction in cost per token. But the most important thing about Vera Rubin is that it isn't a chip — it's a rack. Seven co-designed chips (including the acquired Groq 3 LPX for inference decode) spanning CPU, GPU, switch, NIC, DPU, and Ethernet. In short, NVIDIA has shifted to selling entire systems.

AMD's MI350 has gained traction as the primary alternative, with MI450 "Helios" racks launching Q3 2026 at Rubin-level performance. Every hyperscaler now also designs custom silicon — Google's TPU Trillium, Amazon's Inferentia2, Microsoft's Maia 200, Meta's MTIA — expected to capture 15–25% of the market by 2030, primarily in internal inference.

The truly interesting story for venture, though, is happening at the emerging layer: specialised inference companies, photonics startups, and longer-term quantum bets.

Specialist Inference Silicon

Here is what the specialised inference landscape looks like right now:

Figure 3, Sources: Composite data from Crunchbase + Company Announcements.

Cerebras deserves particular attention. Its Wafer-Scale Engine (WSE-3) is the largest chip ever manufactured: a full 300mm silicon wafer with some 4 trillion transistors. It broke 1,000 tokens per second for Llama 3.1-405B, roughly 75x faster than GPU-based alternatives. In January 2026, OpenAI signed a deal with Cerebras to deliver 750 MW of compute through 2028, valued at over $10 billion. Perplexity and Mistral are active inference customers, and Cerebras is targeting an IPO for Q2 2026.
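To make the throughput claim concrete, here is a minimal back-of-the-envelope sketch. It takes the two figures quoted above (1,000 tokens per second and a roughly 75x speedup) and derives the GPU baseline they imply. The 500-token response length is a hypothetical chosen purely for illustration, and the function names are ours, not anything from Cerebras.

```python
def implied_baseline_tps(fast_tps: float, speedup: float) -> float:
    """Throughput of the slower system implied by a quoted speedup."""
    return fast_tps / speedup


def generation_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Time to stream num_tokens at a steady tokens-per-second rate."""
    return num_tokens / tokens_per_second


WAFER_SCALE_TPS = 1_000.0  # quoted in the article
QUOTED_SPEEDUP = 75.0      # quoted in the article ("roughly 75x")
RESPONSE_TOKENS = 500      # hypothetical response length, for illustration only

baseline_tps = implied_baseline_tps(WAFER_SCALE_TPS, QUOTED_SPEEDUP)
fast_seconds = generation_seconds(RESPONSE_TOKENS, WAFER_SCALE_TPS)
slow_seconds = generation_seconds(RESPONSE_TOKENS, baseline_tps)

print(f"Implied GPU baseline: {baseline_tps:.1f} tokens/s")
print(f"{RESPONSE_TOKENS}-token response: {fast_seconds:.1f}s vs {slow_seconds:.1f}s")
```

Under these assumptions, the same response that streams in half a second on the wafer-scale system would take over half a minute on the implied baseline, which is the gap that matters for real-time and agentic products.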

For European deep tech specifically, Fractile is worth a spotlight. The Bristol-based startup raised $22.5 million led by the NATO Innovation Fund, with Pat Gelsinger (former Intel CEO) investing personally. Its in-memory compute architecture fuses processing and memory on a single chip, claiming 100x faster inference at one-tenth the system cost. Chip delivery is targeted for 2027. Fractile seems to be at the leading edge of another trend: sovereign and defence compute.

Photonics: The Emerging Player

If the energy constraint is the defining challenge, photonics might be part of the answer. Photonic chips, as the name suggests, use light for computation. Co-packaged optics (CPO), which embeds optical conversion directly onto the switch chip, can reduce rack-level power consumption by up to 40%.

Figure 4, Source: GVR, Crunchbase funding data.

Photonic interconnects are a 2026–2028 deployment story: Lightmatter's Passage L200, the world's first 3D CPO product, ships in 2026 and is being integrated with GPU makers for 2027–2028 products. Photonic compute is longer-horizon, but Neurophos ($110M raised) is developing brand new optical processing units slated for deployment sometime post-2027.

Quantum: The Long Arc

Quantum computing has been gaining momentum over the past several years. The honest outlook, though, is that it likely won’t be a major player until well into the 2030s. Key issues like quantum error correction (QEC) and sheer scale remain substantial barriers. What’s interesting about quantum, however, is that it’s a fundamentally different kind of compute that excels in highly parallel processes. That in turn opens up potential for hybrid classical-quantum workflows, where GPU clusters are augmented by quantum computers.

NVIDIA has recently built quantum integration into its CUDA-Q platform, and Quantum Machines launched an Open Acceleration Stack in March 2026 integrating GPUs, CPUs, and quantum backends. Hybrid orchestration seems to be the way to go.

Figure 5, Source: CB Insights & SETR 2025.

Total quantum investment reached $4.2 billion in 2025 (a record), with all-time funding at $11.1 billion. Hardware dominates at 72% of cumulative capital. Key companies span multiple qubit technologies: PsiQuantum ($2.3B total, $7B valuation, photonic), Quantinuum ($600M Series B, $10B valuation, trapped-ion), IQM (€320M, superconducting, Finland), Alice & Bob ($104M, cat qubits, France), Quandela (photonic, France), and PASQAL (neutral-atom, France). A liquidity window is opening: Quantinuum filed a confidential S-1 in January 2026, and Infleqtion completed its NYSE listing.

For European investors, the picture mirrors the situation with AI compute as a whole: strong science, weak commercialisation capital. Europe holds world-class quantum positions (IQM systems are deployed in EuroHPC Factories), but the EU receives roughly 5% of global private quantum funding versus 50% for the US. The investable thesis is hybrid quantum-AI infrastructure and the enabling stack rather than direct competition on qubit count. That round goes to Google and Microsoft, for now.

Europe’s Position: Late Start, Structural Upsides

Europe's entire public AI compute capacity, roughly 57,000 accelerators across the EuroHPC network, is an order of magnitude smaller than that of a single US hyperscaler. American cloud providers hold ~85% of the European cloud market. Few European companies, if any, seriously compete at the frontier of AI chip design, though Europe does have ASML to underpin major parts of the fab supply chain.

The picture may have begun to shift, though. AI has become the leading sector for European VC investment, up 75% YoY to reach $17.5 billion, representing about a quarter of all European VC flows. In the first two months of 2026 alone, European AI startups raised more than $9 billion.
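The growth and share figures above imply two further numbers that the text does not spell out: the prior-year total and the overall size of European VC flows. A quick sketch of that arithmetic follows. The inputs (17.5 billion, 75% growth, a quarter share) come from the paragraph above; the helper names are illustrative, and since "about a quarter" is approximate, the implied total is a rough estimate rather than a reported figure.

```python
def prior_year_value(current: float, yoy_growth: float) -> float:
    """Back out last year's value from this year's value and YoY growth."""
    return current / (1.0 + yoy_growth)


def implied_total(part: float, share: float) -> float:
    """Back out the whole from a part and its share of the whole."""
    return part / share


AI_VC_BN = 17.5     # European AI VC investment, in billions (from the article)
YOY_GROWTH = 0.75   # "up 75% YoY" (from the article)
SHARE_OF_VC = 0.25  # "about a quarter" (approximate, from the article)

previous_ai_vc_bn = prior_year_value(AI_VC_BN, YOY_GROWTH)
total_vc_bn = implied_total(AI_VC_BN, SHARE_OF_VC)

print(f"Implied prior-year AI investment: ~{previous_ai_vc_bn:.1f}bn")
print(f"Implied total European VC flows: ~{total_vc_bn:.0f}bn")
```

In other words, the quoted figures suggest AI investment of around 10 billion a year earlier, against total European VC flows in the region of 70 billion.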

At the venture level, the leading European deep tech and AI investors — Atomico ($4.7B AUM), EQT Ventures (€1.1B Fund III), Balderton ($4.5B raised), Earlybird, Elaia, and Partech — are increasingly deploying into AI infrastructure and enabling hardware, not just software. Keen Venture Partners in Amsterdam closed €150 million for what it calls Europe's largest defence-tech VC vehicle, leading with an AI sovereignty thesis. Specialist funds like AI Seed (London, pre-seed/seed AI-only) and Quantonation (Paris, the world's most active quantum investor with 36 rounds) are filling the early-stage gap. The NATO Innovation Fund and European Innovation Council Fund are writing cheques into dual-use hardware companies like Fractile and IQM.

Nvidia itself is the most significant new entrant. It participated in more European AI rounds in 2025 than in all previous years combined — 14, up from seven in 2024. Jensen Huang’s strategy here seems to be investing in his own future customers, as Nvidia has done with other major funding rounds.

So what does the investment landscape look like for Europe?

Figure 6, Source: EU Startups, Funding announcements.

The only major European player when it comes to models is, of course, Mistral AI. A major story in and of itself, it will likely spawn an ecosystem around itself: a market for European inference chips, new hardware and infrastructure. Nscale is the other major player here, going from seed to Series C in under 18 months. Mistral itself is increasingly an infrastructure play alongside its model development: €722 million in debt for its Paris data centre, 18,000 Grace Blackwell systems, and a planned 1.4 GW European AI campus with NVIDIA and MGX. Then there are the specialist companies: Corintis with its chip cooling tech, DataCrunch developing sovereign cloud, and others.

On the public side, the EU's AI Factory network (15 facilities, €10B total investment) serves research but not yet commercial scale. The gigafactories, should construction stick to schedule, will start to come online at the tail end of the decade. NVIDIA is co-investing directly: 10,000 Blackwell GPUs via Deutsche Telekom, 14,000 for UK data centres, ~12,600 GB300s in Portugal, and an AI Factory Lab in Grenoble.

The trajectory for the coming years seems to be a scaleup on the infrastructure angle, fuelled by a mix of Nvidia investment, public initiatives and VC flows going to specialist companies. Long-term, the perennial growth capital problem will start to bite. If European companies struggle to scale beyond Series B, that will have negative knock-on effects across the ecosystem. The alternative is also possible, though, with the entire ecosystem being pulled upwards by the star success stories of the continent.

The Bigger Picture

Even with every hyperscaler throwing money at the wall, there just isn’t enough compute to go around. We are already seeing the effects: OpenAI’s termination of Sora and Anthropic’s tightening of usage limits are the first signs.

The interesting shift is that the scaleup race is no longer just about GPU counts. Specialised tech, from inference and photonics to infrastructure plays, is attracting more and more funding. The defining companies of the next decade are likely raising their seeds and Series As right about now. The window is bound to stay open for a while, perhaps for a couple of years.

One thing is clear, though: the momentum has only begun to build.